deeplearning.ai homework:Class 1 Week 4 assignment4_2

Deep Neural Network for Image Classification: Application

When you finish this, you will have finished the last programming assignment of Week 4, and also the last programming assignment of this course!

You will use use the functions you’d implemented in the previous assignment to build a deep network, and apply it to cat vs non-cat classification. Hopefully, you will see an improvement in accuracy relative to your previous logistic regression implementation.

After this assignment you will be able to:

- Build and apply a deep neural network to supervised learning.

Let’s get started!

1 - Packages

Let’s first import all the packages that you will need during this assignment.

- numpy is the fundamental package for scientific computing with Python.

- matplotlib is a library to plot graphs in Python.

- h5py is a common package to interact with a dataset that is stored on an H5 file.

- PIL and scipy are used here to test your model with your own picture at the end.

- dnn_app_utils provides the functions implemented in the “Building your Deep Neural Network: Step by Step” assignment to this notebook.

- np.random.seed(1) is used to keep all the random function calls consistent. It will help us grade your work.

1 | import time |

2 - Dataset

You will use the same “Cat vs non-Cat” dataset as in “Logistic Regression as a Neural Network” (Assignment 2). The model you had built had 70% test accuracy on classifying cats vs non-cats images. Hopefully, your new model will perform a better!

Problem Statement: You are given a dataset (“data.h5”) containing:

- a training set of m_train images labelled as cat (1) or non-cat (0)

- a test set of m_test images labelled as cat and non-cat

- each image is of shape (num_px, num_px, 3) where 3 is for the 3 channels (RGB).

Let’s get more familiar with the dataset. Load the data by running the cell below.

1 | train_x_orig, train_y, test_x_orig, test_y, classes = load_data() |

The following code will show you an image in the dataset. Feel free to change the index and re-run the cell multiple times to see other images.

1 | # Example of a picture |

y = 1. It's a cat picture.

1 | # Explore your dataset |

Number of training examples: 209

Number of testing examples: 50

Each image is of size: (64, 64, 3)

train_x_orig shape: (209, 64, 64, 3)

train_y shape: (1, 209)

test_x_orig shape: (50, 64, 64, 3)

test_y shape: (1, 50)

As usual, you reshape and standardize the images before feeding them to the network. The code is given in the cell below.

1 | # Reshape the training and test examples |

train_x's shape: (12288, 209)

test_x's shape: (12288, 50)

equals which is the size of one reshaped image vector.

3 - Architecture of your model

Now that you are familiar with the dataset, it is time to build a deep neural network to distinguish cat images from non-cat images.

You will build two different models:

- A 2-layer neural network

- An L-layer deep neural network

You will then compare the performance of these models, and also try out different values for .

Let’s look at the two architectures.

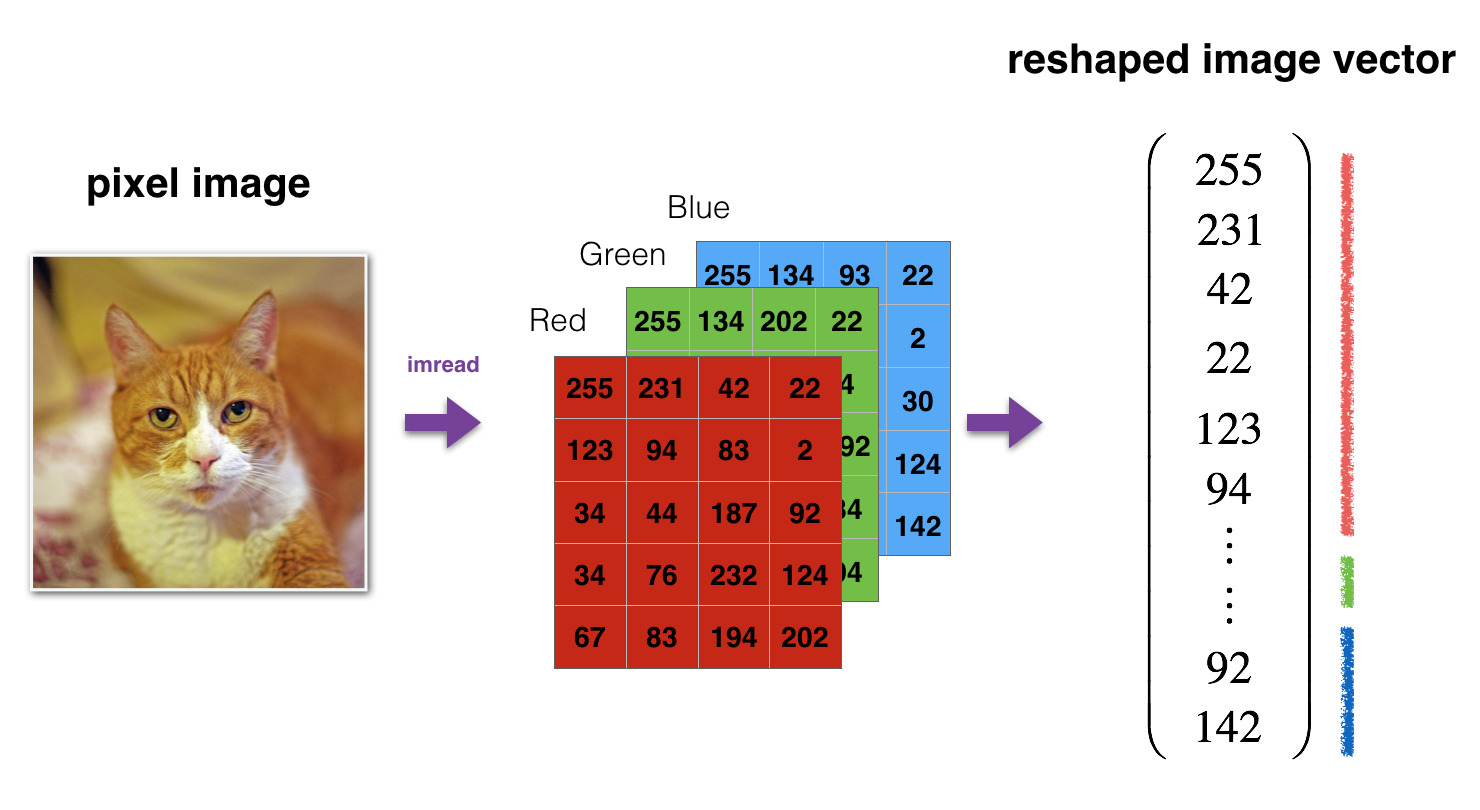

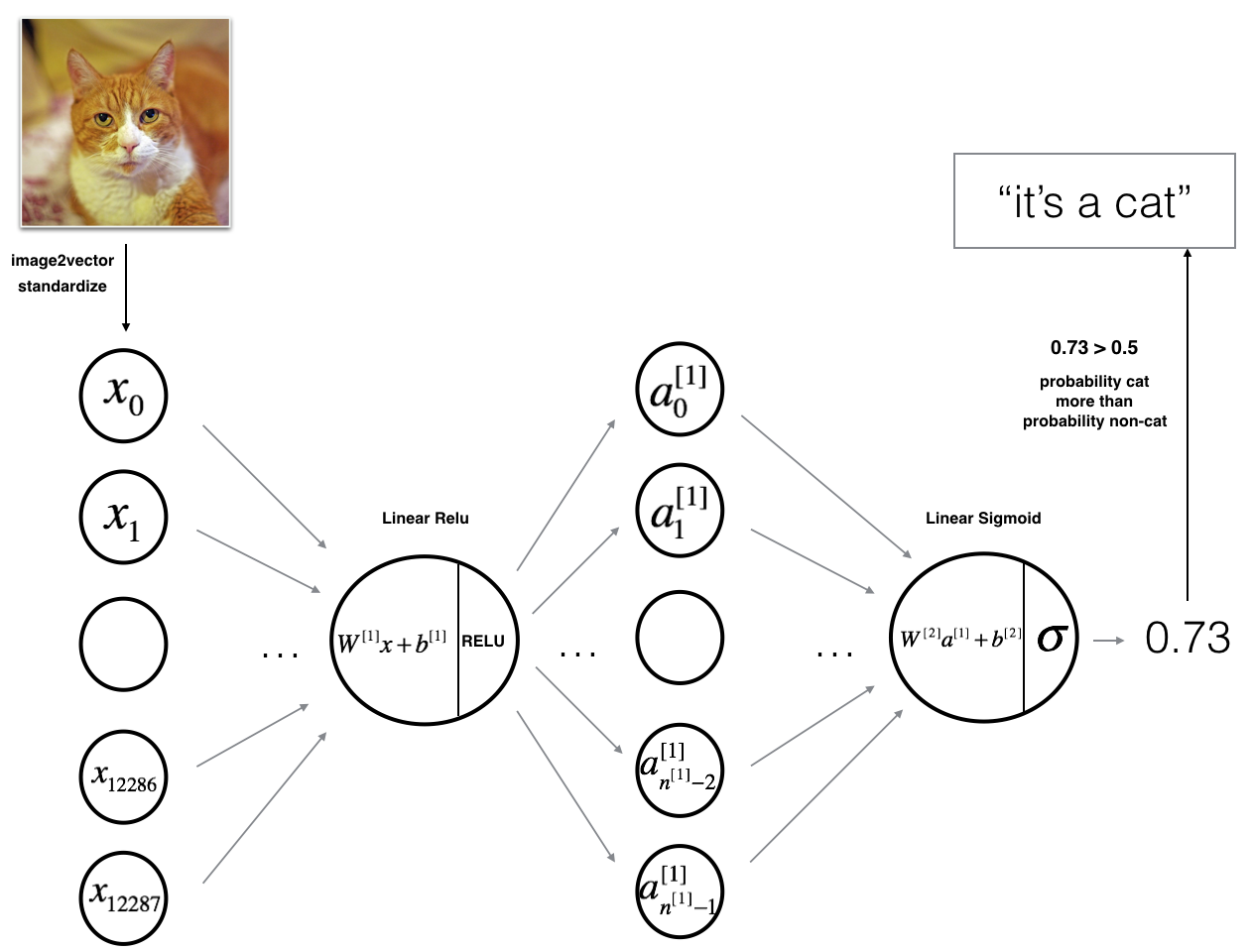

3.1 - 2-layer neural network

The model can be summarized as: INPUT -> LINEAR -> RELU -> LINEAR -> SIGMOID -> OUTPUT.

Detailed Architecture of figure 2:

- The input is a (64,64,3) image which is flattened to a vector of size .

- The corresponding vector: $$[x_0,x_1,…,x_{12287}]^T$$ is then multiplied by the weight matrix of size .

- You then add a bias term and take its relu to get the following vector: $$[a_0^{[1]}, a_1^{[1]},…, a_{n^{[1]}-1}^{[1]}]^T$$.

- You then repeat the same process.

- You multiply the resulting vector by and add your intercept (bias).

- Finally, you take the sigmoid of the result. If it is greater than 0.5, you classify it to be a cat.

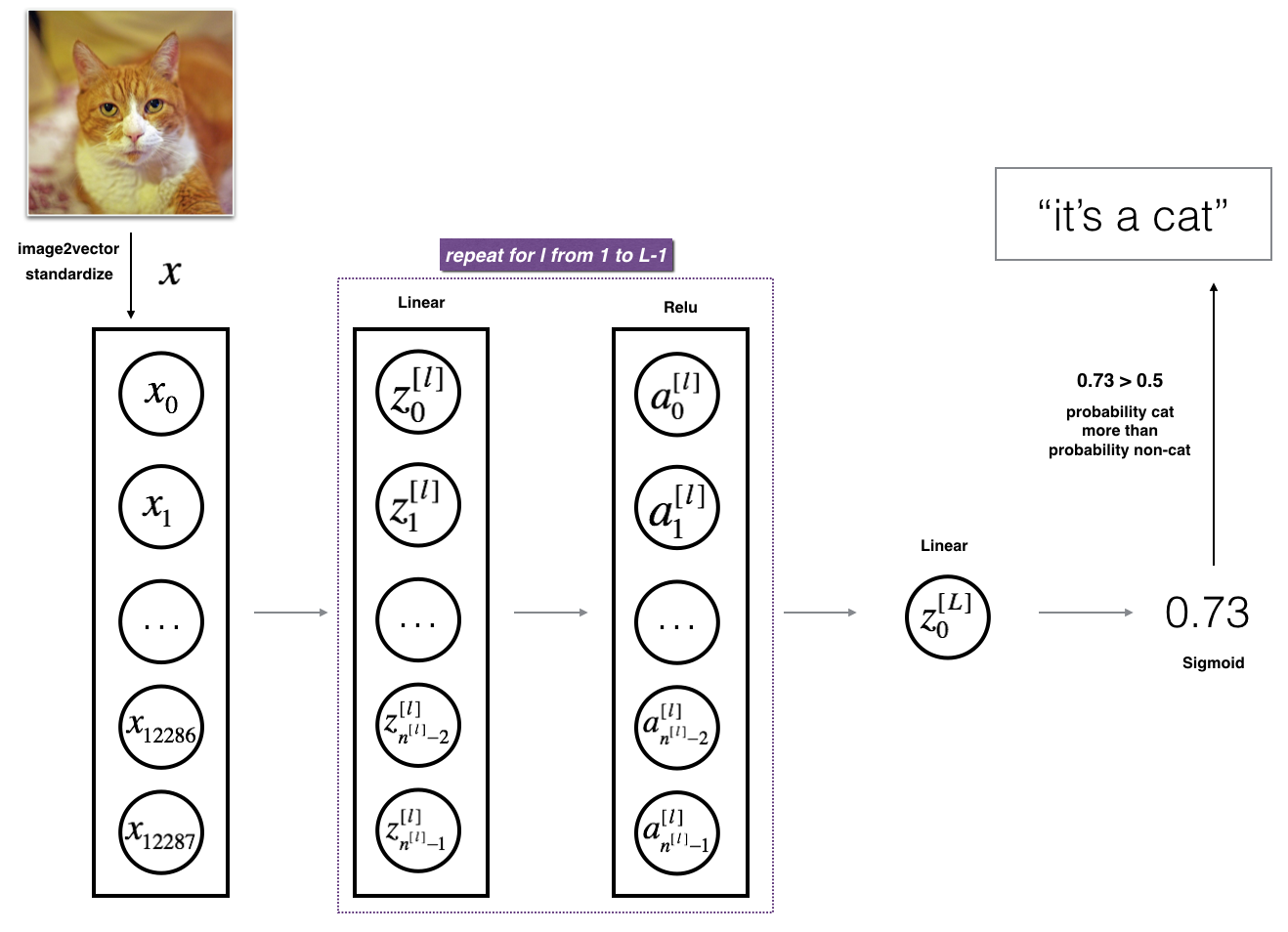

3.2 - L-layer deep neural network

It is hard to represent an L-layer deep neural network with the above representation. However, here is a simplified network representation:

The model can be summarized as: [LINEAR -> RELU] $\times$ (L-1) -> LINEAR -> SIGMOID

Detailed Architecture of figure 3:

- The input is a (64,64,3) image which is flattened to a vector of size (12288,1).

- The corresponding vector: $$[x_0,x_1,…,x_{12287}]^T$$ is then multiplied by the weight matrix and then you add the intercept . The result is called the linear unit.

- Next, you take the relu of the linear unit. This process could be repeated several times for each depending on the model architecture.

- Finally, you take the sigmoid of the final linear unit. If it is greater than 0.5, you classify it to be a cat.

3.3 - General methodology

As usual you will follow the Deep Learning methodology to build the model:

1. Initialize parameters / Define hyperparameters

2. Loop for num_iterations:

a. Forward propagation

b. Compute cost function

c. Backward propagation

d. Update parameters (using parameters, and grads from backprop)

4. Use trained parameters to predict labels

Let’s now implement those two models!

4 - Two-layer neural network

Question: Use the helper functions you have implemented in the previous assignment to build a 2-layer neural network with the following structure: LINEAR -> RELU -> LINEAR -> SIGMOID. The functions you may need and their inputs are:

1 | def initialize_parameters(n_x, n_h, n_y): |

1 | ### CONSTANTS DEFINING THE MODEL #### |

1 | # GRADED FUNCTION: two_layer_model |

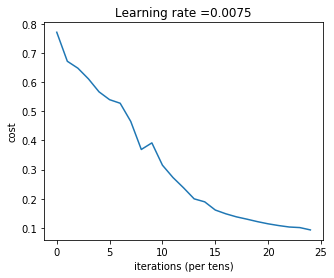

Run the cell below to train your parameters. See if your model runs. The cost should be decreasing. It may take up to 5 minutes to run 2500 iterations. Check if the “Cost after iteration 0” matches the expected output below, if not click on the square (⬛) on the upper bar of the notebook to stop the cell and try to find your error.

1 | parameters = two_layer_model(train_x, train_y, layers_dims = (n_x, n_h, n_y), num_iterations = 2500, print_cost=True) |

Cost after iteration 0: 0.6930497356599888

Cost after iteration 100: 0.6464320953428849

Cost after iteration 200: 0.6325140647912677

Cost after iteration 300: 0.6015024920354665

Cost after iteration 400: 0.5601966311605747

Cost after iteration 500: 0.5158304772764729

Cost after iteration 600: 0.4754901313943325

Cost after iteration 700: 0.43391631512257495

Cost after iteration 800: 0.4007977536203885

Cost after iteration 900: 0.3580705011323798

Cost after iteration 1000: 0.33942815383664127

Cost after iteration 1100: 0.30527536361962665

Cost after iteration 1200: 0.27491377282130164

Cost after iteration 1300: 0.24681768210614857

Cost after iteration 1400: 0.1985073503746608

Cost after iteration 1500: 0.17448318112556627

Cost after iteration 1600: 0.17080762978096517

Cost after iteration 1700: 0.11306524562164728

Cost after iteration 1800: 0.09629426845937158

Cost after iteration 1900: 0.0834261795972687

Cost after iteration 2000: 0.07439078704319084

Cost after iteration 2100: 0.06630748132267934

Cost after iteration 2200: 0.059193295010381744

Cost after iteration 2300: 0.053361403485605606

Cost after iteration 2400: 0.04855478562877019

Expected Output:

| Cost after iteration 0 | 0.6930497356599888 |

| Cost after iteration 100 | 0.6464320953428849 |

| ... | ... |

| Cost after iteration 2400 | 0.048554785628770206 |

Good thing you built a vectorized implementation! Otherwise it might have taken 10 times longer to train this.

Now, you can use the trained parameters to classify images from the dataset. To see your predictions on the training and test sets, run the cell below.

1 | predictions_train = predict(train_x, train_y, parameters) |

Accuracy: 1.0

Expected Output:

| Accuracy | 1.0 |

1 | predictions_test = predict(test_x, test_y, parameters) |

Accuracy: 0.72

Expected Output:

| Accuracy | 0.72 |

Note: You may notice that running the model on fewer iterations (say 1500) gives better accuracy on the test set. This is called “early stopping” and we will talk about it in the next course. Early stopping is a way to prevent overfitting.

Congratulations! It seems that your 2-layer neural network has better performance (72%) than the logistic regression implementation (70%, assignment week 2). Let’s see if you can do even better with an -layer model.

5 - L-layer Neural Network

Question: Use the helper functions you have implemented previously to build an -layer neural network with the following structure: [LINEAR -> RELU](L-1) -> LINEAR -> SIGMOID. The functions you may need and their inputs are:

1 | def initialize_parameters_deep(layer_dims): |

1 | ### CONSTANTS ### |

1 | # GRADED FUNCTION: L_layer_model |

You will now train the model as a 5-layer neural network.

Run the cell below to train your model. The cost should decrease on every iteration. It may take up to 5 minutes to run 2500 iterations. Check if the “Cost after iteration 0” matches the expected output below, if not click on the square (⬛) on the upper bar of the notebook to stop the cell and try to find your error.

1 | parameters = L_layer_model(train_x, train_y, layers_dims, num_iterations = 2500, print_cost = True) |

Cost after iteration 0: 0.771749

Cost after iteration 100: 0.672053

Cost after iteration 200: 0.648263

Cost after iteration 300: 0.611507

Cost after iteration 400: 0.567047

Cost after iteration 500: 0.540138

Cost after iteration 600: 0.527930

Cost after iteration 700: 0.465477

Cost after iteration 800: 0.369126

Cost after iteration 900: 0.391747

Cost after iteration 1000: 0.315187

Cost after iteration 1100: 0.272700

Cost after iteration 1200: 0.237419

Cost after iteration 1300: 0.199601

Cost after iteration 1400: 0.189263

Cost after iteration 1500: 0.161189

Cost after iteration 1600: 0.148214

Cost after iteration 1700: 0.137775

Cost after iteration 1800: 0.129740

Cost after iteration 1900: 0.121225

Cost after iteration 2000: 0.113821

Cost after iteration 2100: 0.107839

Cost after iteration 2200: 0.102855

Cost after iteration 2300: 0.100897

Cost after iteration 2400: 0.092878

Expected Output:

| Cost after iteration 0 | 0.771749 |

| Cost after iteration 100 | 0.672053 |

| ... | ... |

| Cost after iteration 2400 | 0.092878 |

1 | pred_train = predict(train_x, train_y, parameters) |

Accuracy: 0.985645933014

| Train Accuracy | 0.985645933014 |

1 | pred_test = predict(test_x, test_y, parameters) |

Accuracy: 0.8

Expected Output:

| Test Accuracy | 0.8 |

Congrats! It seems that your 5-layer neural network has better performance (80%) than your 2-layer neural network (72%) on the same test set.

This is good performance for this task. Nice job!

Though in the next course on “Improving deep neural networks” you will learn how to obtain even higher accuracy by systematically searching for better hyperparameters (learning_rate, layers_dims, num_iterations, and others you’ll also learn in the next course).

6) Results Analysis

First, let’s take a look at some images the L-layer model labeled incorrectly. This will show a few mislabeled images.

1 | print_mislabeled_images(classes, test_x, test_y, pred_test) |

A few type of images the model tends to do poorly on include:

- Cat body in an unusual position

- Cat appears against a background of a similar color

- Unusual cat color and species

- Camera Angle

- Brightness of the picture

- Scale variation (cat is very large or small in image)

References:

- for auto-reloading external module: http://stackoverflow.com/questions/1907993/autoreload-of-modules-in-ipython