deeplearning.ai homework: Class 1 Week 4 assignment4_1

Building your Deep Neural Network: Step by Step

Welcome to your week 4 assignment (part 1 of 2)! You have previously trained a 2-layer Neural Network (with a single hidden layer). This week, you will build a deep neural network, with as many layers as you want!

- In this notebook, you will implement all the functions required to build a deep neural network.

- In the next assignment, you will use these functions to build a deep neural network for image classification.

After this assignment you will be able to:

- Use non-linear units like ReLU to improve your model

- Build a deeper neural network (with more than 1 hidden layer)

- Implement an easy-to-use neural network class

Notation:

- Superscript $[l]$ denotes a quantity associated with the $l^{th}$ layer.

- Example: $a^{[L]}$ is the $L^{th}$ layer activation. $W^{[L]}$ and $b^{[L]}$ are the $L^{th}$ layer parameters.

- Superscript $(i)$ denotes a quantity associated with the $i^{th}$ example.

- Example: $x^{(i)}$ is the $i^{th}$ training example.

- Subscript $i$ denotes the $i^{th}$ entry of a vector.

- Example: $a^{[l]}_i$ denotes the $i^{th}$ entry of the $l^{th}$ layer’s activations.

Let’s get started!

1 - Packages

Let’s first import all the packages that you will need during this assignment.

- numpy is the main package for scientific computing with Python.

- matplotlib is a library to plot graphs in Python.

- dnn_utils provides some necessary functions for this notebook.

- testCases provides some test cases to assess the correctness of your functions.

- np.random.seed(1) is used to keep all the random function calls consistent. It will help us grade your work. Please don’t change the seed.

```python
import numpy as np
```

2 - Outline of the Assignment

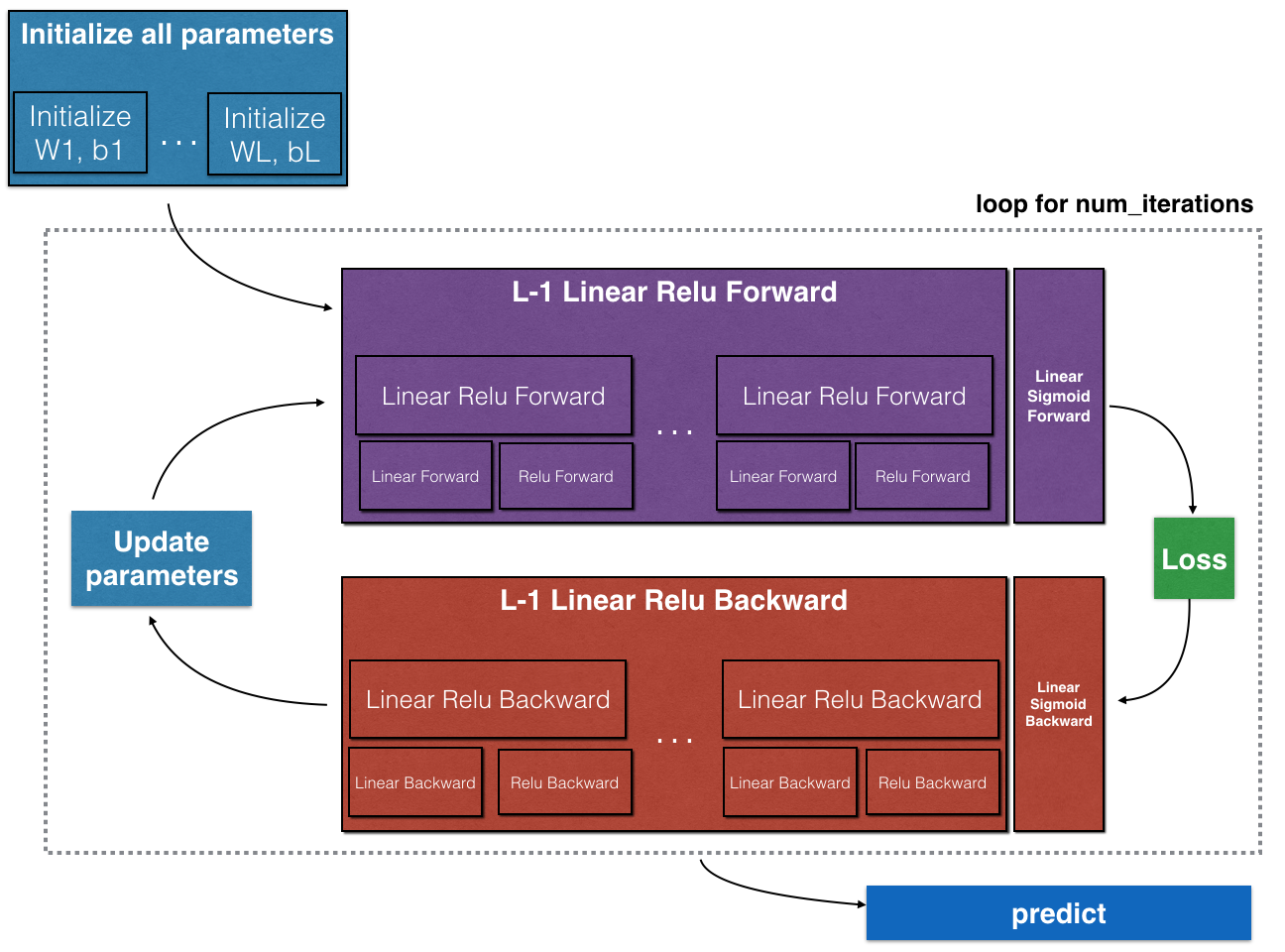

To build your neural network, you will be implementing several “helper functions”. These helper functions will be used in the next assignment to build a two-layer neural network and an L-layer neural network. Each small helper function you will implement will have detailed instructions that will walk you through the necessary steps. Here is an outline of this assignment. You will:

- Initialize the parameters for a two-layer network and for an $L$-layer neural network.

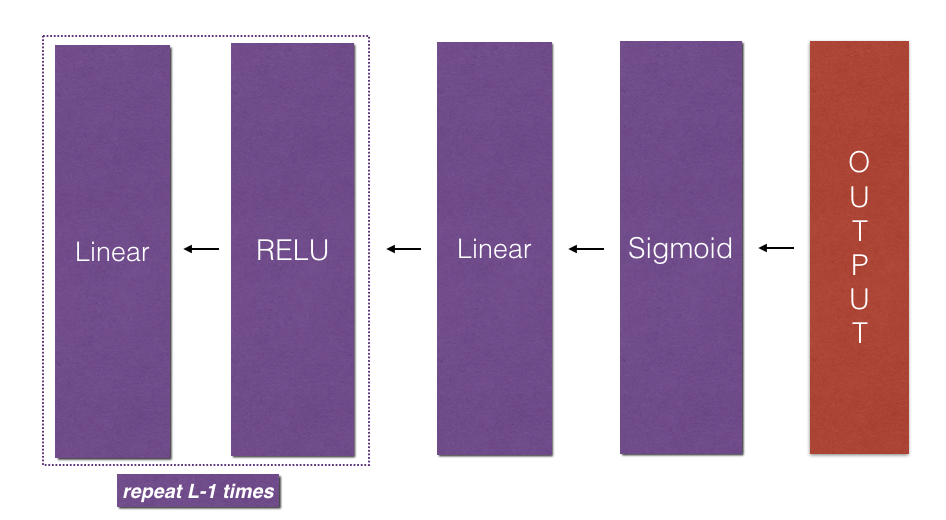

- Implement the forward propagation module (shown in purple in the figure below).

- Complete the LINEAR part of a layer’s forward propagation step (resulting in $Z^{[l]}$).

- We give you the ACTIVATION function (relu/sigmoid).

- Combine the previous two steps into a new [LINEAR->ACTIVATION] forward function.

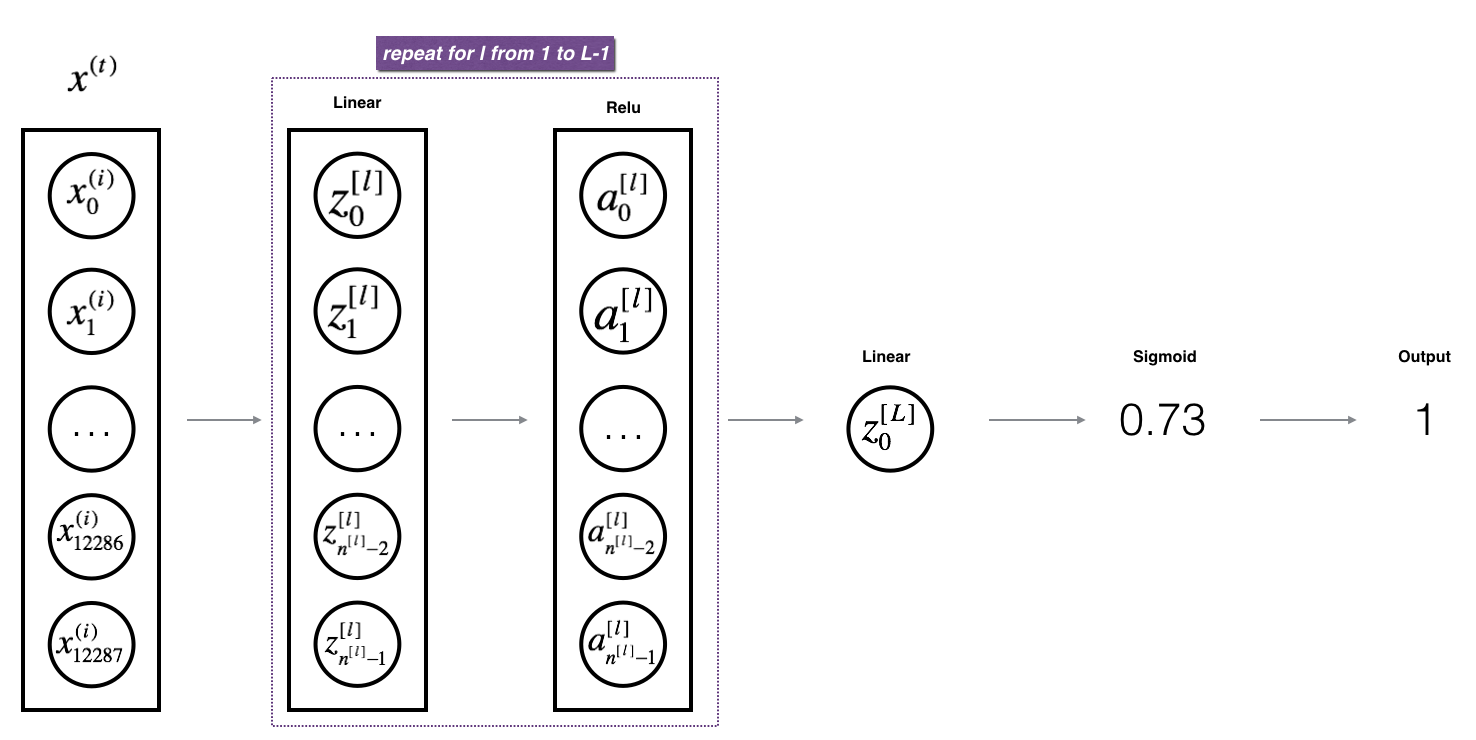

- Stack the [LINEAR->RELU] forward function L-1 times (for layers 1 through L-1) and add a [LINEAR->SIGMOID] at the end (for the final layer $L$). This gives you a new L_model_forward function.

- Compute the loss.

- Implement the backward propagation module (denoted in red in the figure below).

- Complete the LINEAR part of a layer’s backward propagation step.

- We give you the gradient of the ACTIVATION function (relu_backward/sigmoid_backward).

- Combine the previous two steps into a new [LINEAR->ACTIVATION] backward function.

- Stack [LINEAR->RELU] backward L-1 times and add [LINEAR->SIGMOID] backward in a new L_model_backward function.

- Finally update the parameters.

Note that for every forward function, there is a corresponding backward function. That is why at every step of your forward module you will be storing some values in a cache. The cached values are useful for computing gradients. In the backpropagation module you will then use the cache to calculate the gradients. This assignment will show you exactly how to carry out each of these steps.

3 - Initialization

You will write two helper functions that will initialize the parameters for your model. The first function will be used to initialize parameters for a two-layer model. The second one will generalize this initialization process to $L$ layers.

3.1 - 2-layer Neural Network

Exercise: Create and initialize the parameters of the 2-layer neural network.

Instructions:

- The model’s structure is: LINEAR -> RELU -> LINEAR -> SIGMOID.

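- Use random initialization for the weight matrices. Use `np.random.randn(shape) * 0.01` with the correct shape. Note that `np.random.randn` takes each dimension as a separate argument, e.g. `np.random.randn(rows, cols) * 0.01`.

- Use zero initialization for the biases. Use `np.zeros(shape)`.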

```python
# GRADED FUNCTION: initialize_parameters
```
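The body of the graded function is not reproduced in this post. The sketch below shows one way it could look. It assumes the signature `initialize_parameters(n_x, n_h, n_y)` (input, hidden and output layer sizes, consistent with the call `initialize_parameters(2,2,1)`) and uses the dictionary keys that appear in the printed output. It is not the official solution.

```python
import numpy as np

def initialize_parameters(n_x, n_h, n_y):
    """Parameters for LINEAR -> RELU -> LINEAR -> SIGMOID.

    n_x: input size, n_h: hidden layer size, n_y: output size (assumed argument names).
    """
    W1 = np.random.randn(n_h, n_x) * 0.01  # small random weights break symmetry between units
    b1 = np.zeros((n_h, 1))                 # biases can start at zero
    W2 = np.random.randn(n_y, n_h) * 0.01
    b2 = np.zeros((n_y, 1))
    return {"W1": W1, "b1": b1, "W2": W2, "b2": b2}

np.random.seed(1)  # seed once so the random draws are reproducible
parameters = initialize_parameters(2, 2, 1)
for key, value in parameters.items():
    print(key, "=", value)
```

With `np.random.seed(1)` set before the call, the two `randn` draws should produce the same `W1` and `W2` values as the expected output below.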

```python
parameters = initialize_parameters(2,2,1)
```

```
W1 = [[ 0.01624345 -0.00611756]
 [-0.00528172 -0.01072969]]
b1 = [[ 0.]
 [ 0.]]
W2 = [[ 0.00865408 -0.02301539]]
b2 = [[ 0.]]
```

Expected output:

| Quantity | Expected value |
| --- | --- |
| W1 | [[ 0.01624345 -0.00611756] [-0.00528172 -0.01072969]] |
| b1 | [[ 0.] [ 0.]] |
| W2 | [[ 0.00865408 -0.02301539]] |
| b2 | [[ 0.]] |

3.2 - L-layer Neural Network

The initialization for a deeper L-layer neural network is more complicated because there are many more weight matrices and bias vectors. When completing initialize_parameters_deep, make sure that the dimensions match between consecutive layers. Recall that $n^{[l]}$ is the number of units in layer $l$. For example, if the size of our input $X$ is $(n^{[0]}, 209)$ (with $m = 209$ examples), then:

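| | Shape of W | Shape of b | Linear output | Shape of output |
| --- | --- | --- | --- | --- |
| Layer 1 | $(n^{[1]}, n^{[0]})$ | $(n^{[1]}, 1)$ | $Z^{[1]} = W^{[1]} X + b^{[1]}$ | $(n^{[1]}, 209)$ |
| $\vdots$ | $\vdots$ | $\vdots$ | $\vdots$ | $\vdots$ |
| Layer L-1 | $(n^{[L-1]}, n^{[L-2]})$ | $(n^{[L-1]}, 1)$ | $Z^{[L-1]} = W^{[L-1]} A^{[L-2]} + b^{[L-1]}$ | $(n^{[L-1]}, 209)$ |
| Layer L | $(n^{[L]}, n^{[L-1]})$ | $(n^{[L]}, 1)$ | $Z^{[L]} = W^{[L]} A^{[L-1]} + b^{[L]}$ | $(n^{[L]}, 209)$ |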

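Remember that when we compute $W X + b$ in Python, NumPy carries out broadcasting. For example, if:

$$W = \begin{bmatrix} j & k & l\\ m & n & o \\ p & q & r \end{bmatrix}\;\;\; X = \begin{bmatrix} a & b & c\\ d & e & f \\ g & h & i \end{bmatrix} \;\;\; b = \begin{bmatrix} s \\ t \\ u \end{bmatrix}$$

Then $W X + b$ will be:

$$W X + b = \begin{bmatrix} (ja + kd + lg) + s & (jb + ke + lh) + s & (jc + kf + li) + s\\ (ma + nd + og) + t & (mb + ne + oh) + t & (mc + nf + oi) + t\\ (pa + qd + rg) + u & (pb + qe + rh) + u & (pc + qf + ri) + u \end{bmatrix}$$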
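You can check the broadcasting rule directly in NumPy. The matrices below are arbitrary illustrative values. The point is that a bias of shape (n, 1) is added to every column of `W @ X`, exactly as if it had been tiled across the columns first:

```python
import numpy as np

W = np.arange(9.0).reshape(3, 3)      # [[0, 1, 2], [3, 4, 5], [6, 7, 8]]
X = np.ones((3, 3))                   # 3 examples, one per column
b = np.array([[10.0], [20.0], [30.0]])  # column vector of shape (3, 1)

Z = W @ X + b  # b is broadcast: the same bias column is added to each of the 3 columns

# Equivalent explicit version: copy b once per column so the shapes match exactly
Z_explicit = W @ X + np.tile(b, (1, X.shape[1]))
print(Z)
```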

Exercise: Implement initialization for an L-layer Neural Network.

Instructions:

- The model’s structure is [LINEAR -> RELU] $\times$ (L-1) -> LINEAR -> SIGMOID. That is, it has $L-1$ layers using a ReLU activation function followed by an output layer with a sigmoid activation function.

- Use random initialization for the weight matrices. Use `np.random.randn(shape) * 0.01`, i.e. the standard normal `randn` (not the uniform `rand`), with the dimensions passed as separate arguments.

- Use zeros initialization for the biases. Use `np.zeros(shape)`.

- We will store $n^{[l]}$, the number of units in different layers, in a variable `layer_dims`. For example, the `layer_dims` for the “Planar Data classification model” from last week would have been [2,4,1]: there were two inputs, one hidden layer with 4 hidden units, and an output layer with 1 output unit. This means `W1`’s shape was (4,2), `b1` was (4,1), `W2` was (1,4) and `b2` was (1,1). Now you will generalize this to $L$ layers!

- Here is the implementation for $L=1$ (one layer neural network). It should inspire you to implement the general case ($L$-layer neural network).

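```python
if L == 1:  # one layer: W1 has shape (n[1], n[0]) and b1 has shape (n[1], 1)
    parameters = {"W1": np.random.randn(layer_dims[1], layer_dims[0]) * 0.01, "b1": np.zeros((layer_dims[1], 1))}
```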

```python
# GRADED FUNCTION: initialize_parameters_deep
```
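Here is a minimal sketch of the general case. It assumes `layer_dims` is a Python list such as `[5, 4, 3]` and that the keys follow the `"W1"`, `"b1"`, ... pattern shown in the output. The seed value 3 in the usage lines is an assumption. With it, the draws should match the expected `W1` and `W2` below.

```python
import numpy as np

def initialize_parameters_deep(layer_dims):
    """layer_dims[l] is n[l], the number of units in layer l; layer_dims[0] is the input size."""
    parameters = {}
    L = len(layer_dims)  # number of layers, counting the input layer
    for l in range(1, L):
        # W[l] maps the n[l-1] activations of the previous layer to the n[l] units of layer l
        parameters["W" + str(l)] = np.random.randn(layer_dims[l], layer_dims[l - 1]) * 0.01
        parameters["b" + str(l)] = np.zeros((layer_dims[l], 1))
    return parameters

np.random.seed(3)
parameters = initialize_parameters_deep([5, 4, 3])
for key, value in parameters.items():
    print(key, value.shape)
```

The loop also covers the $L=1$ case: `[n0, n1]` produces exactly one `W1` and one `b1`.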

```python
parameters = initialize_parameters_deep([5,4,3])
```

```
W1 = [[ 0.01788628 0.0043651 0.00096497 -0.01863493 -0.00277388]
 [-0.00354759 -0.00082741 -0.00627001 -0.00043818 -0.00477218]
 [-0.01313865 0.00884622 0.00881318 0.01709573 0.00050034]
 [-0.00404677 -0.0054536 -0.01546477 0.00982367 -0.01101068]]
b1 = [[ 0.]
 [ 0.]
 [ 0.]
 [ 0.]]
W2 = [[-0.01185047 -0.0020565 0.01486148 0.00236716]
 [-0.01023785 -0.00712993 0.00625245 -0.00160513]
 [-0.00768836 -0.00230031 0.00745056 0.01976111]]
b2 = [[ 0.]
 [ 0.]
 [ 0.]]
```

Expected output:

| Quantity | Expected value |
| --- | --- |
| W1 | [[ 0.01788628 0.0043651 0.00096497 -0.01863493 -0.00277388] [-0.00354759 -0.00082741 -0.00627001 -0.00043818 -0.00477218] [-0.01313865 0.00884622 0.00881318 0.01709573 0.00050034] [-0.00404677 -0.0054536 -0.01546477 0.00982367 -0.01101068]] |
| b1 | [[ 0.] [ 0.] [ 0.] [ 0.]] |
| W2 | [[-0.01185047 -0.0020565 0.01486148 0.00236716] [-0.01023785 -0.00712993 0.00625245 -0.00160513] [-0.00768836 -0.00230031 0.00745056 0.01976111]] |
| b2 | [[ 0.] [ 0.] [ 0.]] |

4 - Forward propagation module

4.1 - Linear Forward

Now that you have initialized your parameters, you will implement the forward propagation module. You will start by implementing some basic functions that you will use later when implementing the model. You will complete three functions in this order:

- LINEAR

- LINEAR -> ACTIVATION where ACTIVATION will be either ReLU or Sigmoid.

- [LINEAR -> RELU] $\times$ (L-1) -> LINEAR -> SIGMOID (whole model)

The linear forward module (vectorized over all the examples) computes the following equation:

$$Z^{[l]} = W^{[l]}A^{[l-1]} + b^{[l]}$$

where $A^{[0]} = X$.

Exercise: Build the linear part of forward propagation.

Reminder:

The mathematical representation of this unit is $Z^{[l]} = W^{[l]}A^{[l-1]} + b^{[l]}$. You may also find np.dot() useful. If your dimensions don’t match, printing W.shape may help.

```python
# GRADED FUNCTION: linear_forward
```
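Here is a sketch of `linear_forward`. It assumes the function takes `(A, W, b)` in the order used by the test case and returns `Z` together with a cache `(A, W, b)` that the backward pass can reuse. The small input arrays are made up for illustration.

```python
import numpy as np

def linear_forward(A, W, b):
    """Linear part of a layer's forward propagation: Z = W A + b.

    A: previous activations, shape (n[l-1], m); W: shape (n[l], n[l-1]); b: shape (n[l], 1).
    Returns Z with shape (n[l], m) and the cache (A, W, b) for the backward pass.
    """
    Z = np.dot(W, A) + b  # b is broadcast across the m columns
    assert Z.shape == (W.shape[0], A.shape[1])
    cache = (A, W, b)
    return Z, cache

A = np.array([[1.0, 2.0], [3.0, 4.0]])  # 2 units in the previous layer, 2 examples
W = np.array([[0.5, -1.0]])             # 1 unit in this layer
b = np.array([[0.1]])
Z, cache = linear_forward(A, W, b)
print(Z)
```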

```python
A, W, b = linear_forward_test_case()
```

```
Z = [[ 3.26295337 -1.23429987]]
```

Expected output:

| Quantity | Expected value |
| --- | --- |
| Z | [[ 3.26295337 -1.23429987]] |

4.2 - Linear-Activation Forward

In this notebook, you will use two activation functions:

- Sigmoid: $\sigma(Z) = \sigma(W A + b) = \frac{1}{1 + e^{-(W A + b)}}$. We have provided you with the `sigmoid` function. This function returns two items: the activation value “A” and a “cache” that contains “Z” (it’s what we will feed in to the corresponding backward function). To use it you could just call:

```python
A, activation_cache = sigmoid(Z)
```

- ReLU: The mathematical formula for ReLU is $A = RELU(Z) = \max(0, Z)$. We have provided you with the `relu` function. This function returns two items: the activation value “A” and a “cache” that contains “Z” (it’s what we will feed in to the corresponding backward function). To use it you could just call:

```python
A, activation_cache = relu(Z)
```
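The provided `sigmoid` and `relu` functions come from `dnn_utils`, and their source is not shown in this post. Below is a hypothetical re-implementation that follows the two formulas above and the documented return value (the activation plus a cache holding `Z`):

```python
import numpy as np

def sigmoid(Z):
    """Sigmoid activation; returns A and the cache Z for the backward pass."""
    A = 1 / (1 + np.exp(-Z))
    return A, Z

def relu(Z):
    """ReLU activation, max(0, Z) element-wise; returns A and the cache Z."""
    A = np.maximum(0, Z)
    return A, Z

Z = np.array([[-1.0, 0.0, 2.0]])
A_sig, cache_sig = sigmoid(Z)
A_relu, cache_relu = relu(Z)
print(A_sig, A_relu)
```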

For more convenience, you are going to group two functions (Linear and Activation) into one function (LINEAR->ACTIVATION). Hence, you will implement a function that does the LINEAR forward step followed by an ACTIVATION forward step.

Exercise: Implement the forward propagation of the LINEAR->ACTIVATION layer. The mathematical relation is $A^{[l]} = g(Z^{[l]}) = g(W^{[l]}A^{[l-1]} + b^{[l]})$, where the activation “g” can be sigmoid() or relu(). Use linear_forward() and the correct activation function.

```python
# GRADED FUNCTION: linear_activation_forward
```
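Putting the pieces together, here is a sketch of `linear_activation_forward`. It makes two assumptions: the activation is selected with a string argument (`"sigmoid"` or `"relu"`), and the cache is the pair `(linear_cache, activation_cache)`. The helper functions are compact stand-ins so the block runs on its own.

```python
import numpy as np

def sigmoid(Z):
    return 1 / (1 + np.exp(-Z)), Z          # (activation, activation_cache)

def relu(Z):
    return np.maximum(0, Z), Z              # (activation, activation_cache)

def linear_forward(A, W, b):
    return np.dot(W, A) + b, (A, W, b)      # (Z, linear_cache)

def linear_activation_forward(A_prev, W, b, activation):
    """LINEAR -> ACTIVATION for one layer; activation is "sigmoid" or "relu"."""
    Z, linear_cache = linear_forward(A_prev, W, b)
    if activation == "sigmoid":
        A, activation_cache = sigmoid(Z)
    elif activation == "relu":
        A, activation_cache = relu(Z)
    else:
        raise ValueError("activation must be 'sigmoid' or 'relu'")
    # Both caches are needed later by the backward pass
    return A, (linear_cache, activation_cache)

A_prev = np.array([[1.0, -1.0], [2.0, 0.5]])
W = np.array([[1.0, 0.5]])
b = np.array([[0.0]])
A_relu, cache_relu = linear_activation_forward(A_prev, W, b, activation="relu")
A_sig, cache_sig = linear_activation_forward(A_prev, W, b, activation="sigmoid")
print(A_relu, A_sig)
```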

```python
A_prev, W, b = linear_activation_forward_test_case()
```

```
With sigmoid: A = [[ 0.96890023 0.11013289]]
With ReLU: A = [[ 3.43896131 0. ]]
```

Expected output:

| Quantity | Expected value |
| --- | --- |
| With sigmoid: A | [[ 0.96890023 0.11013289]] |
| With ReLU: A | [[ 3.43896131 0. ]] |

Note: In deep learning, the “[LINEAR->ACTIVATION]” computation is counted as a single layer in the neural network, not two layers.

4.3 - L-Layer Model

For even more convenience when implementing the $L$-layer Neural Net, you will need a function that replicates the previous one (linear_activation_forward with RELU) $L-1$ times, then follows that with one linear_activation_forward with SIGMOID.

Exercise: Implement the forward propagation of the above model.

Instruction: In the code below, the variable AL will denote $A^{[L]} = \sigma(Z^{[L]}) = \sigma(W^{[L]} A^{[L-1]} + b^{[L]})$. (This is sometimes also called Yhat, i.e., this is $\hat{Y}$.)

Tips:

- Use the functions you had previously written

- Use a for loop to replicate [LINEAR->RELU] (L-1) times

- Don’t forget to keep track of the caches in the “caches” list. To add a new value `c` to a `list`, you can use `list.append(c)`.

```python
# GRADED FUNCTION: L_model_forward
```
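Here is a sketch of `L_model_forward`. It assumes `parameters` holds exactly one `W` and one `b` per layer, so the number of layers is `len(parameters) // 2`. The helpers are minimal stand-ins for the functions built earlier, and the random test network is purely illustrative.

```python
import numpy as np

def sigmoid(Z):
    return 1 / (1 + np.exp(-Z)), Z          # (activation, activation_cache)

def relu(Z):
    return np.maximum(0, Z), Z              # (activation, activation_cache)

def linear_activation_forward(A_prev, W, b, activation):
    Z = np.dot(W, A_prev) + b
    A, activation_cache = sigmoid(Z) if activation == "sigmoid" else relu(Z)
    return A, ((A_prev, W, b), activation_cache)

def L_model_forward(X, parameters):
    """[LINEAR->RELU] x (L-1) -> LINEAR->SIGMOID; returns AL and one cache per layer."""
    caches = []
    A = X
    L = len(parameters) // 2                # one W and one b per layer
    for l in range(1, L):                   # hidden layers 1 .. L-1 use ReLU
        A, cache = linear_activation_forward(A, parameters["W" + str(l)], parameters["b" + str(l)], "relu")
        caches.append(cache)
    # Output layer L uses the sigmoid
    AL, cache = linear_activation_forward(A, parameters["W" + str(L)], parameters["b" + str(L)], "sigmoid")
    caches.append(cache)
    return AL, caches

np.random.seed(0)
X = np.random.randn(4, 2)                   # 4 features, 2 examples
parameters = {"W1": np.random.randn(3, 4), "b1": np.zeros((3, 1)),
              "W2": np.random.randn(1, 3), "b2": np.zeros((1, 1))}
AL, caches = L_model_forward(X, parameters)
print(AL.shape, len(caches))                # (1, 2) 2
```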

```python
X, parameters = L_model_forward_test_case()
```

```
AL = [[ 0.17007265 0.2524272 ]]
Length of caches list = 2
```

Expected output:

| Quantity | Expected value |
| --- | --- |
| AL | [[ 0.17007265 0.2524272 ]] |
| Length of caches list | 2 |

Great! Now you have a full forward propagation that takes the input X and outputs a row vector $A^{[L]}$ containing your predictions. It also records all intermediate values in “caches”. Using $A^{[L]}$, you can compute the cost of your predictions.

5 - Cost function

With forward propagation in place, you need to compute the cost, because you want to check whether your model is actually learning.

Exercise: Compute the cross-entropy cost $J$ using the following formula: $$-\frac{1}{m} \sum\limits_{i = 1}^{m} \left(y^{(i)}\log\left(a^{[L] (i)}\right) + (1-y^{(i)})\log\left(1- a^{[L](i)}\right)\right) \tag{7}$$

```python
# GRADED FUNCTION: compute_cost
```
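Here is a vectorized sketch of `compute_cost` implementing formula (7). It assumes `AL` and `Y` both have shape (1, m) and that a plain Python float is an acceptable return type. The inputs in the usage lines are made up.

```python
import numpy as np

def compute_cost(AL, Y):
    """Cross-entropy cost. AL: predicted probabilities, Y: 0/1 labels, both shape (1, m)."""
    m = Y.shape[1]
    # Element-wise products pick log(a) where y = 1 and log(1 - a) where y = 0
    cost = -np.sum(Y * np.log(AL) + (1 - Y) * np.log(1 - AL)) / m
    return float(cost)  # plain Python float rather than a NumPy scalar

Y = np.array([[1, 1, 1]])
AL = np.array([[0.8, 0.9, 0.4]])
cost = compute_cost(AL, Y)
print(cost)  # ≈ 0.4149
```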

```python
Y, AL = compute_cost_test_case()
```

```
cost = 0.414931599615
```

Expected Output:

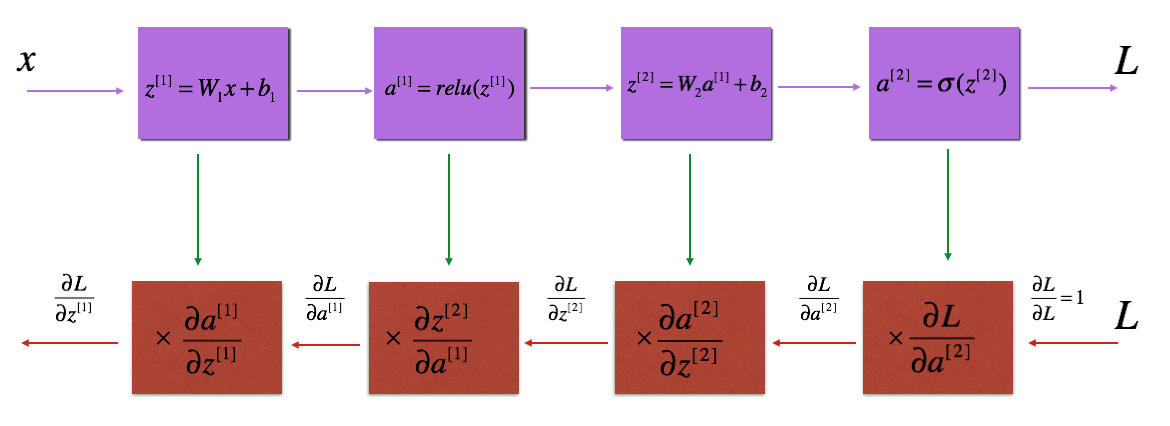

6 - Backward propagation module

Just like with forward propagation, you will implement helper functions for backpropagation. Remember that back propagation is used to calculate the gradient of the loss function with respect to the parameters.

Reminder:

The purple blocks represent the forward propagation, and the red blocks represent the backward propagation.

Now, similar to forward propagation, you are going to build the backward propagation in three steps:

- LINEAR backward

- LINEAR -> ACTIVATION backward where ACTIVATION computes the derivative of either the ReLU or sigmoid activation

- [LINEAR -> RELU] $\times$ (L-1) -> LINEAR -> SIGMOID backward (whole model)

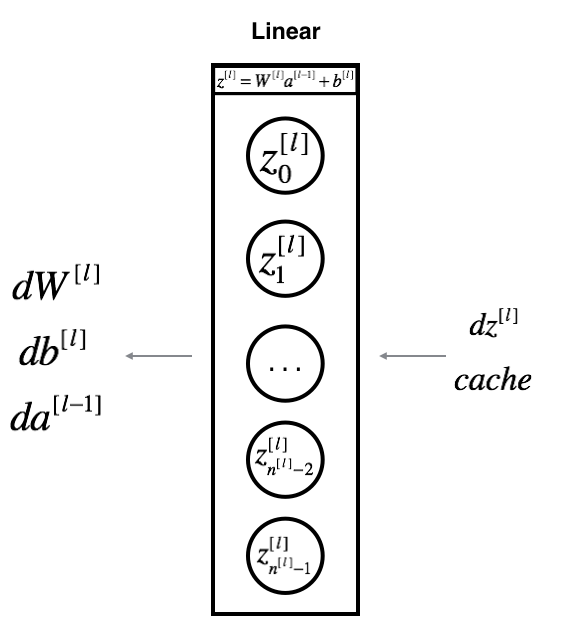

6.1 - Linear backward

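For layer $l$, the linear part is $Z^{[l]} = W^{[l]} A^{[l-1]} + b^{[l]}$ (followed by an activation).

Suppose you have already calculated the derivative $dZ^{[l]} = \frac{\partial \mathcal{L} }{\partial Z^{[l]}}$. You want to get $(dW^{[l]}, db^{[l]}, dA^{[l-1]})$.

The three outputs $(dW^{[l]}, db^{[l]}, dA^{[l-1]})$ are computed using the input $dZ^{[l]}$. Here are the formulas you need:

$$ dW^{[l]} = \frac{\partial \mathcal{J} }{\partial W^{[l]}} = \frac{1}{m} dZ^{[l]} A^{[l-1] T} \tag{8}$$

$$ db^{[l]} = \frac{\partial \mathcal{J} }{\partial b^{[l]}} = \frac{1}{m} \sum_{i = 1}^{m} dZ^{[l](i)} \tag{9}$$

$$ dA^{[l-1]} = \frac{\partial \mathcal{L} }{\partial A^{[l-1]}} = W^{[l] T} dZ^{[l]} \tag{10}$$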

Exercise: Use the 3 formulas above to implement linear_backward().

```python
# GRADED FUNCTION: linear_backward
```
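Here is a sketch of `linear_backward`. It assumes `cache` is the `(A_prev, W, b)` tuple stored by the forward linear step. It applies the three gradient formulas (weights, bias, previous activation), which are written out in the comments. The test arrays are illustrative.

```python
import numpy as np

def linear_backward(dZ, cache):
    """Backward step for Z = W A_prev + b.

    dZ: gradient w.r.t. Z, shape (n[l], m); cache: (A_prev, W, b) from the forward pass.
    """
    A_prev, W, b = cache
    m = A_prev.shape[1]
    dW = np.dot(dZ, A_prev.T) / m                # dW = (1/m) dZ A_prev^T
    db = np.sum(dZ, axis=1, keepdims=True) / m   # db = (1/m) * sum of dZ over the m examples
    dA_prev = np.dot(W.T, dZ)                    # dA_prev = W^T dZ
    return dA_prev, dW, db

A_prev = np.array([[1.0, 2.0], [3.0, 4.0]])
W = np.array([[1.0, -1.0]])
b = np.array([[0.0]])
dZ = np.array([[1.0, 1.0]])
dA_prev, dW, db = linear_backward(dZ, (A_prev, W, b))
print(dA_prev, dW, db)
```

`keepdims=True` keeps `db` as a column of shape (n[l], 1), matching the shape of `b`.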

```python
# Set up some test inputs
```

```
dA_prev = [[ 0.51822968 -0.19517421]
 [-0.40506361 0.15255393]
 [ 2.37496825 -0.89445391]]
dW = [[-0.10076895 1.40685096 1.64992505]]
db = [[ 0.50629448]]
```

Expected Output:

| Quantity | Expected value |
| --- | --- |
| dA_prev | [[ 0.51822968 -0.19517421] [-0.40506361 0.15255393] [ 2.37496825 -0.89445391]] |

6.2 - Linear-Activation backward

Next, you will create a function, linear_activation_backward, that merges two helper functions: linear_backward and the backward step for the activation.

To help you implement linear_activation_backward, we provided two backward functions:

sigmoid_backward: Implements the backward propagation for SIGMOID unit. You can call it as follows:

```python
dZ = sigmoid_backward(dA, activation_cache)
```

relu_backward: Implements the backward propagation for RELU unit. You can call it as follows:

```python
dZ = relu_backward(dA, activation_cache)
```

If $g(.)$ is the activation function, sigmoid_backward and relu_backward compute $$dZ^{[l]} = dA^{[l]} * g'(Z^{[l]}) \tag{11}$$ where $*$ denotes element-wise multiplication.
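Like the forward activations, `relu_backward` and `sigmoid_backward` come from `dnn_utils`. Below is a hypothetical re-implementation of formula (11) that assumes the activation cache is just `Z`. It uses $g'(Z) = \sigma(Z)(1 - \sigma(Z))$ for the sigmoid and a 0/1 mask for ReLU. The derivative at exactly $Z = 0$ is treated as 0, which is a convention, since ReLU is not differentiable there.

```python
import numpy as np

def relu_backward(dA, cache):
    """dZ = dA * g'(Z) for ReLU: g'(Z) is 1 where Z > 0 and 0 elsewhere."""
    Z = cache
    dZ = np.array(dA, copy=True)
    dZ[Z <= 0] = 0
    return dZ

def sigmoid_backward(dA, cache):
    """dZ = dA * g'(Z) for the sigmoid, with g'(Z) = s * (1 - s) and s = sigmoid(Z)."""
    Z = cache
    s = 1 / (1 + np.exp(-Z))
    return dA * s * (1 - s)

Z = np.array([[-2.0, 0.5, 3.0]])
dA = np.array([[1.0, 2.0, -1.0]])
print(relu_backward(dA, Z))
print(sigmoid_backward(dA, Z))
```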

Exercise: Implement the backpropagation for the LINEAR->ACTIVATION layer.

```python
# GRADED FUNCTION: linear_activation_backward
```
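Here is a sketch of `linear_activation_backward`. It assumes the same `(linear_cache, activation_cache)` layout as the forward step and a string `activation` argument. The helpers are compact stand-ins so the block runs on its own. The usage lines build a cache by hand from a made-up layer.

```python
import numpy as np

def relu_backward(dA, Z):
    return dA * (Z > 0)                     # g'(Z) = 1 where Z > 0, else 0

def sigmoid_backward(dA, Z):
    s = 1 / (1 + np.exp(-Z))
    return dA * s * (1 - s)                 # g'(Z) = s (1 - s)

def linear_backward(dZ, linear_cache):
    A_prev, W, b = linear_cache
    m = A_prev.shape[1]
    dW = np.dot(dZ, A_prev.T) / m
    db = np.sum(dZ, axis=1, keepdims=True) / m
    dA_prev = np.dot(W.T, dZ)
    return dA_prev, dW, db

def linear_activation_backward(dA, cache, activation):
    """Backward pass through LINEAR -> ACTIVATION; cache is (linear_cache, activation_cache)."""
    linear_cache, activation_cache = cache
    if activation == "relu":
        dZ = relu_backward(dA, activation_cache)
    elif activation == "sigmoid":
        dZ = sigmoid_backward(dA, activation_cache)
    else:
        raise ValueError("activation must be 'sigmoid' or 'relu'")
    return linear_backward(dZ, linear_cache)

A_prev = np.array([[1.0, 2.0], [3.0, 4.0]])
W = np.array([[1.0, 0.5]])
b = np.array([[0.0]])
Z = np.dot(W, A_prev) + b                   # [[2.5, 4.0]], positive, so ReLU passes dA through
cache = ((A_prev, W, b), Z)
dA = np.ones((1, 2))
dA_prev, dW, db = linear_activation_backward(dA, cache, "relu")
print(dA_prev, dW, db)
```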

```python
AL, linear_activation_cache = linear_activation_backward_test_case()
```

```
sigmoid:
dA_prev = [[ 0.11017994 0.01105339]
 [ 0.09466817 0.00949723]
 [-0.05743092 -0.00576154]]
dW = [[ 0.10266786 0.09778551 -0.01968084]]
db = [[-0.05729622]]

relu:
dA_prev = [[ 0.44090989 0. ]
 [ 0.37883606 0. ]
 [-0.2298228 0. ]]
dW = [[ 0.44513824 0.37371418 -0.10478989]]
db = [[-0.20837892]]
```

Expected output with sigmoid:

| Quantity | Expected value |
| --- | --- |
| dA_prev | [[ 0.11017994 0.01105339] [ 0.09466817 0.00949723] [-0.05743092 -0.00576154]] |

Expected output with relu:

| Quantity | Expected value |
| --- | --- |
| dA_prev | [[ 0.44090989 0. ] [ 0.37883606 0. ] [-0.2298228 0. ]] |

6.3 - L-Model Backward

Now you will implement the backward function for the whole network. Recall that when you implemented the L_model_forward function, at each iteration you stored a cache containing ($A^{[l-1]}$, $W^{[l]}$, $b^{[l]}$, and $Z^{[l]}$). In the backpropagation module, you will use those variables to compute the gradients. Therefore, in the L_model_backward function, you will iterate through all the hidden layers backward, starting from layer $L$. On each step, you will use the cached values for layer $l$ to backpropagate through layer $l$. Figure 5 below shows the backward pass.

**Initializing backpropagation**:

To backpropagate through this network, we know that the output is $A^{[L]} = \sigma(Z^{[L]})$. Your code thus needs to compute dAL $= \frac{\partial \mathcal{L}}{\partial A^{[L]}}$.

To do so, use this formula (derived using calculus which you don’t need in-depth knowledge of):

```python
dAL = - (np.divide(Y, AL) - np.divide(1 - Y, 1 - AL))  # derivative of cost with respect to AL
```
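You can confirm this formula numerically. The sketch below compares `dAL` with central finite differences of the cross-entropy loss summed over examples. The helper `loss` and the sample values are hypothetical. Note that `dAL` carries no $\frac{1}{m}$ factor. The averaging over examples happens later, in the $dW$ and $db$ formulas of the linear backward step.

```python
import numpy as np

def loss(AL, Y):
    """Cross-entropy loss summed over examples (no 1/m factor)."""
    return -np.sum(Y * np.log(AL) + (1 - Y) * np.log(1 - AL))

Y = np.array([[1.0, 0.0, 1.0]])
AL = np.array([[0.7, 0.2, 0.9]])

dAL = -(np.divide(Y, AL) - np.divide(1 - Y, 1 - AL))  # formula from the text

# Central finite differences, perturbing one entry of AL at a time
eps = 1e-6
numeric = np.zeros_like(AL)
for j in range(AL.shape[1]):
    step = np.zeros_like(AL)
    step[0, j] = eps
    numeric[0, j] = (loss(AL + step, Y) - loss(AL - step, Y)) / (2 * eps)

print(np.max(np.abs(dAL - numeric)))  # should be tiny
```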

You can then use this post-activation gradient dAL to keep going backward. As seen in Figure 5, you can now feed dAL into the LINEAR->SIGMOID backward function you implemented (which will use the cached values stored by the L_model_forward function). After that, you will have to use a for loop to iterate through all the other layers using the LINEAR->RELU backward function. You should store each dA, dW, and db in the grads dictionary. To do so, store $dW^{[l]}$ under the key "dW" + str(l), i.e. grads["dW" + str(l)] = $dW^{[l]}$.

For example, for $l = 3$ this would store $dW^{[3]}$ in grads["dW3"].

Exercise: Implement backpropagation for the [LINEAR->RELU] $\times$ (L-1) -> LINEAR -> SIGMOID model.

```python
# GRADED FUNCTION: L_model_backward
```
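Here is a sketch of `L_model_backward`. It assumes each cache is `((A_prev, W, b), Z)`, as in a forward pass that stores the linear cache and `Z`.

One assumption deserves a flag. In this sketch, `grads["dA" + str(l)]` holds $dA^{[l]}$, so the gradient with respect to `X` is stored under `"dA0"`. The output below labels the (4, 2) input gradient as `dA1`, which suggests the notebook's version shifts the `dA` keys by one. Adjust the keys if you need to match it.

```python
import numpy as np

def linear_activation_backward(dA, cache, activation):
    """Backward pass through one LINEAR -> ACTIVATION layer; cache is ((A_prev, W, b), Z)."""
    (A_prev, W, b), Z = cache
    if activation == "relu":
        dZ = dA * (Z > 0)                   # ReLU derivative: 1 where Z > 0, else 0
    else:                                   # "sigmoid"
        s = 1 / (1 + np.exp(-Z))
        dZ = dA * s * (1 - s)
    m = A_prev.shape[1]
    dW = np.dot(dZ, A_prev.T) / m
    db = np.sum(dZ, axis=1, keepdims=True) / m
    return np.dot(W.T, dZ), dW, db

def L_model_backward(AL, Y, caches):
    """Gradients of [LINEAR->RELU] x (L-1) -> LINEAR->SIGMOID, keyed "dA<l>", "dW<l>", "db<l>"."""
    grads = {}
    L = len(caches)                         # one cache per layer
    Y = Y.reshape(AL.shape)
    dAL = -(np.divide(Y, AL) - np.divide(1 - Y, 1 - AL))

    # Output layer L: LINEAR -> SIGMOID
    dA_prev, dW, db = linear_activation_backward(dAL, caches[L - 1], "sigmoid")
    grads["dA" + str(L - 1)], grads["dW" + str(L)], grads["db" + str(L)] = dA_prev, dW, db

    # Hidden layers L-1, ..., 1: LINEAR -> RELU
    for l in reversed(range(1, L)):
        dA_prev, dW, db = linear_activation_backward(grads["dA" + str(l)], caches[l - 1], "relu")
        grads["dA" + str(l - 1)], grads["dW" + str(l)], grads["db" + str(l)] = dA_prev, dW, db
    return grads

# Tiny illustrative 2-layer network: 4 inputs, 3 hidden units, 1 output, 2 examples
np.random.seed(1)
X = np.random.randn(4, 2)
Y = np.array([[1, 0]])
W1, b1 = np.random.randn(3, 4), np.zeros((3, 1))
W2, b2 = np.random.randn(1, 3), np.zeros((1, 1))
Z1 = W1 @ X + b1
A1 = np.maximum(0, Z1)
Z2 = W2 @ A1 + b2
AL = 1 / (1 + np.exp(-Z2))
caches = [((X, W1, b1), Z1), ((A1, W2, b2), Z2)]
grads = L_model_backward(AL, Y, caches)
print(sorted(grads))
```

For a sigmoid output with cross-entropy loss, $dZ^{[L]}$ simplifies to $A^{[L]} - Y$. This gives a convenient sanity check: `grads["dW2"]` should equal `(AL - Y) @ A1.T / m`.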

```python
AL, Y_assess, caches = L_model_backward_test_case()
```

```
dW1 = [[ 0.41010002 0.07807203 0.13798444 0.10502167]
 [ 0. 0. 0. 0. ]
 [ 0.05283652 0.01005865 0.01777766 0.0135308 ]]
db1 = [[-0.22007063]
 [ 0. ]
 [-0.02835349]]
dA1 = [[ 0. 0.52257901]
 [ 0. -0.3269206 ]
 [ 0. -0.32070404]
 [ 0. -0.74079187]]
```

Expected Output:

| Quantity | Expected value |
| --- | --- |
| dW1 | [[ 0.41010002 0.07807203 0.13798444 0.10502167] [ 0. 0. 0. 0. ] [ 0.05283652 0.01005865 0.01777766 0.0135308 ]] |
| dA1 | [[ 0. 0.52257901] [ 0. -0.3269206 ] [ 0. -0.32070404] [ 0. -0.74079187]] |

6.4 - Update Parameters

In this section you will update the parameters of the model using gradient descent:

$$ W^{[l]} = W^{[l]} - \alpha \, dW^{[l]} $$

$$ b^{[l]} = b^{[l]} - \alpha \, db^{[l]} $$

where $\alpha$ is the learning rate. After computing the updated parameters, store them in the parameters dictionary.

Exercise: Implement update_parameters() to update your parameters using gradient descent.

Instructions:

Update parameters using gradient descent on every $W^{[l]}$ and $b^{[l]}$ for $l = 1, 2, ..., L$.

```python
# GRADED FUNCTION: update_parameters
```
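Here is a sketch of `update_parameters`. It assumes the parameter and gradient keys follow the `"W1"`/`"dW1"` pattern and that `learning_rate` is passed explicitly. This version returns a new dictionary instead of updating in place. That is a design choice (the caller's arrays stay untouched), not a requirement of the exercise.

```python
import numpy as np

def update_parameters(parameters, grads, learning_rate):
    """One gradient-descent step: W[l] - alpha * dW[l] and b[l] - alpha * db[l] for every layer."""
    L = len(parameters) // 2                # one W and one b per layer
    updated = {}
    for l in range(1, L + 1):
        updated["W" + str(l)] = parameters["W" + str(l)] - learning_rate * grads["dW" + str(l)]
        updated["b" + str(l)] = parameters["b" + str(l)] - learning_rate * grads["db" + str(l)]
    return updated

parameters = {"W1": np.array([[1.0, 2.0]]), "b1": np.array([[0.5]])}
grads = {"dW1": np.array([[0.1, -0.2]]), "db1": np.array([[1.0]])}
new_parameters = update_parameters(parameters, grads, learning_rate=0.1)
print(new_parameters["W1"], new_parameters["b1"])
```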

```python
parameters, grads = update_parameters_test_case()
```

```
W1 = [[-0.59562069 -0.09991781 -2.14584584 1.82662008]
 [-1.76569676 -0.80627147 0.51115557 -1.18258802]
 [-1.0535704 -0.86128581 0.68284052 2.20374577]]
b1 = [[-0.04659241]
 [-1.28888275]
 [ 0.53405496]]
W2 = [[-0.55569196 0.0354055 1.32964895]]
b2 = [[-0.84610769]]
```

Expected Output:

| Quantity | Expected value |
| --- | --- |
| W1 | [[-0.59562069 -0.09991781 -2.14584584 1.82662008] [-1.76569676 -0.80627147 0.51115557 -1.18258802] [-1.0535704 -0.86128581 0.68284052 2.20374577]] |
| W2 | [[-0.55569196 0.0354055 1.32964895]] |

7 - Conclusion

Congrats on implementing all the functions required for building a deep neural network!

We know it was a long assignment, but going forward it will only get better. The next part of the assignment is easier.

In the next assignment you will put all these together to build two models:

- A two-layer neural network

- An L-layer neural network

You will in fact use these models to classify cat vs non-cat images!